I hear over and over again in programmer forums, mostly beginner forums, that C++ is hard because you have to manually manage memory! This is not only wrong, it’s dangerous. Whenever you see this comment, be wary of following that person’s advice in the future. Why? Simply put, because if you are manually managing memory in C++, you are probably doing it wrong. We will look at why in a moment.

Beyond the fact that you really shouldn’t be manually managing memory all that often in C++, even when you have to, it shouldn’t be all that hard. Memory management in C++ doesn’t make the language hard, it makes it fragile, and there is a big difference. The concepts behind memory management really aren’t rocket science; it’s the kind of thing you can learn in an afternoon. However, screwing it up can easily introduce a bug into your application or cause it to outright crash. This is what I mean by fragile.

So in this post we are going to start with why you shouldn’t be managing memory manually, show you what to do instead, and then, for those times you absolutely have to, show you the ins and outs of basic C++ memory management. One thing I should point out right away is that many of the things I discuss in this article didn’t exist until somewhat recently (such as the smart pointers std::unique_ptr and std::shared_ptr, which were standardized in C++11). Languages evolve, and C++ certainly has. I assume you are using a reasonably current compiler; if you aren’t, much of what we discuss may not apply to you.

Before we can go too far, there are a few concepts you need to understand. When working in C++, there are three kinds of memory you need to be aware of.

The Stack, the Heap and all the other kinds we are going to pretend don’t exist.

First, we will talk about the kinds we won’t talk about. In this discussion I am oversimplifying things slightly to focus on the stuff that is most relevant 99% of the time. There are other kinds of memory (globals, statics, registers, etc.), but until you need to know about them, you can safely ignore them.

That leads us back to the stack and the heap. Let’s start with the stack…

The Stack

The stack is well named: think about a stack of plates at a cafeteria, and you have the basic idea of what the stack is like.

Each time you create a variable on the stack, think of it like adding a plate to the top of this pile. When a variable goes out of scope, think of it like removing a plate from the pile.

Let’s look at it with actual code.

```cpp
#include <iostream>

void SomeFunction(int a2, int b2)
{
    int c = 3;
    int d = 4;
    std::cout << a2 + b2 + c + d;
}

int main(int argc, const char * argv[])
{
    int a = 1;
    int b = 2;
    SomeFunction(a, b);
}
```

This simple program creates a pair of variables, a and b, and passes their values to the function SomeFunction(), which then creates two more variables, c and d, adds them all together and prints out the total.

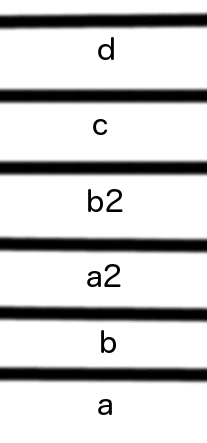

So, what is happening here on the stack? Look at the following diagram and think in terms of the stack of plates (bowls?) above:

As this code runs, first a is added to the stack, then b.

Next, copies of a and b are sent as parameters to our function, resulting in a2 and b2 being added to the stack. This is an important concept to grasp. When you pass an ordinary variable by value, only its value is used: a new variable is created for each parameter and the value is copied into it.

Then within our function, c and d are created (on the stack) as well.

Now is when the stack starts to make sense… when things are deallocated. See, the stack is very aware of scope and when a variable will die. At the end of our function, d, c, b2 and a2 all go out of scope, so they are removed from the stack, like grabbing plates from the top of a plate stack.

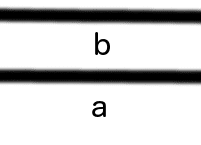

After our function call, our stack will look like:

Then finally our program execution ends, and b and a will be popped from the stack as well.
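A minimal sketch to make the “last plate on, first plate off” order visible, assuming a small class with a destructor is an acceptable stand-in for plain ints (ints don’t announce when they go away). Plate, Inner() and RunStackDemo() are hypothetical names for this illustration: each Plate appends its name to a log when it is destroyed. Function parameters are left out on purpose, because exactly when a parameter is destroyed is implementation-defined.

```cpp
#include <string>
#include <utility>
#include <vector>

// Hypothetical "plate": records its name in a shared log when it is destroyed.
struct Plate {
    Plate(std::string n, std::vector<std::string>& l) : name(std::move(n)), log(l) {}
    ~Plate() { log.push_back(name); }

    std::string name;
    std::vector<std::string>& log;
};

// Plays the role of SomeFunction(): two locals pushed on top of the caller's.
void Inner(std::vector<std::string>& log) {
    Plate c("c", log);
    Plate d("d", log);
}  // d is destroyed first, then c

// Plays the role of main(): a and b live for the whole inner block.
std::vector<std::string> RunStackDemo() {
    std::vector<std::string> log;
    {
        Plate a("a", log);
        Plate b("b", log);
        Inner(log);  // c and d come and go while a and b are still alive
    }  // b is destroyed, then a
    return log;  // {"d", "c", "b", "a"}
}
```

Calling RunStackDemo() gives back the destruction order d, c, b, a: exactly the reverse of the order the variables were created in.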

The nice thing about the stack is that the program knows when a stack variable is no longer needed (when it goes out of scope) and gets rid of it for you. In my initial revision of this post, I made the stack sound like a bad place to create your variables, and this is very much NOT the case. In fact, unless you have a very good reason (see the following paragraph), you should always favour allocating on the stack; it’s a heck of a lot less error prone and often quicker.

The downsides to the stack are twofold. First, it has a limited size… depending on your platform and compiler settings, anywhere from a few KB to a couple of MB. I believe Visual C++ 2010 on Windows creates a default stack of 1MB. Second, sometimes you want variables to outlive the scope they are declared in… quite often actually, such as a function that allocates a chunk of memory too big to efficiently return by value (and thus by copy). In this case you need…

The Heap

The heap is nothing special. Don’t confuse it with the “heap” data structure; they have nothing to do with each other. This “heap” is simply slang English… just as you might say you have “a heap of clothing”, you have “a heap of memory”. It’s simply a big pool of memory available to your application. Unlike the stack, which is very ordered and organized, the heap is just a gigantic blob of memory.

Now, the bright side to the heap is… there’s lots of it. As much memory as your system contains… in fact, probably much more, thanks to virtual memory. When we talk about manual memory management, it’s the heap we are talking about.

So, how do you allocate and free memory on the heap? This is where new and delete come in.

Consider this super contrived example:

```cpp
#include <iostream>

void FillBuffer(char * buffer)
{
    for (int i = 0; i < 10000000; i++)
        buffer[i] = 'A';
}

int main(int argc, const char * argv[])
{
    char * buf = new char[10000000];  // 10,000,000 chars on the heap
    FillBuffer(buf);
    delete [] buf;                    // memory from new[] must be released with delete[]
}
```

Here we allocate our buffer on the heap, which is obvious from the use of new (buf itself is just a pointer holding the buffer’s address). It is then passed into the function FillBuffer, which fills it with the character A. Finally it is deleted. There are a few things of note here: two showing the advantages of the heap, and one potential hand grenade.

First, this code allowed us to make a 10MB data structure; with typical default stack sizes, you simply couldn’t do this on the stack. If you want to see it first hand, try running the following code on your computer:

```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    char buf[10000000];  // 10,000,000 chars on the stack: more than a default stack holds
    for (int i = 0; i < 10000000; i++)
        buf[i] = 'A';
}
```

On MacOS you get EXC_BAD_ACCESS errors as a result, and that makes sense, as you are trying to allocate more memory on the stack (new wasn’t used) than is available. With optimizations turned on, the compiler may remove the never-read buffer entirely, so try a debug build if it doesn’t crash for you. So if you have a large amount of data, it belongs on the heap.

The other major advantage of the above code is that when you call FillBuffer, only a pointer to the data is passed (4 bytes in size on 32-bit Windows, 8 bytes on 64-bit) instead of a 10MB copy. If this concept doesn’t make a lot of sense right now, bear with me; I will explain what a pointer actually is in more detail shortly.

So, what then is the problem with direct memory management? Well, remember this line:

delete [] buf;

Had I accidentally typed:

delete buf;

we would have had a bug on our hands. Memory allocated with new[] must be released with delete[]; mixing the two is undefined behaviour, which might quietly leak the 10 MB, corrupt the heap or crash. And if you don’t manually release (delete) the memory you allocate at all, that memory is lost once the last pointer to it goes out of scope. In this contrived example it’s easy enough to remember a delete for every new, but in a real application it’s a very simple mistake to make, as is forgetting the [] when dealing with an array of values! It’s even easier to leak memory when an exception occurs, because the exception can skip right past your delete, so your program doesn’t necessarily run in the order you expected.
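Here is a small sketch of that exception case. Tracked and LeakyWork() are hypothetical names for this illustration; Tracked simply counts how many instances are currently alive, so a leak shows up as a count that never returns to zero.

```cpp
#include <stdexcept>

// Hypothetical type that counts how many instances currently exist.
struct Tracked {
    static int alive;
    Tracked() { ++alive; }
    ~Tracked() { --alive; }
};
int Tracked::alive = 0;

// Manual new[]/delete[]: if anything between them throws, delete[] is skipped.
void LeakyWork(bool fail) {
    Tracked* items = new Tracked[3];
    if (fail)
        throw std::runtime_error("something went wrong");  // items is never freed
    delete[] items;
}
```

After `try { LeakyWork(true); } catch (const std::runtime_error&) {}`, Tracked::alive is still 3: the exception was handled, but the three objects were never destroyed and their memory was never returned.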

One thing to keep in mind here: I said earlier that if something exists on the heap, it was allocated with new. This is true but somewhat misleading. For example, when you use many of the standard C++ data structures, such as std::vector, the object itself may be created on the stack, but internally it still allocates memory on the heap for its own data. It is just taking care of most of the heavy lifting for you.
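For comparison, here is a sketch of the 10 MB buffer from earlier handed to std::vector instead. MakeFilledBuffer() is a hypothetical helper name. The vector object itself is small enough to sit on the stack, while the characters it manages live on the heap and are freed by its destructor.

```cpp
#include <cstddef>
#include <vector>

// The same big buffer, owned by a std::vector: the chars live on the heap,
// and the vector's destructor frees them automatically.
std::vector<char> MakeFilledBuffer(std::size_t size) {
    std::vector<char> buffer(size, 'A');  // size copies of 'A', allocated inside the vector
    return buffer;                        // moved or elided, not copied
}
```

`std::vector<char> buf = MakeFilledBuffer(10000000);` gives you 10 MB of 'A's with no new, no delete and no stack overflow. Returning the vector by value does not copy the data: since C++11 the local vector is moved out (or the copy is elided altogether).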

So, that’s a quick view of the stack, the heap and how memory works… now I want you to forget it all!

As a developer new to C++, every single time you find yourself typing new or delete, an alarm bell of sorts should go off! Don’t get me wrong, there are reasons to use both, but they should be few and far between. What, then, is the alternative?

Resource Acquisition is Initialization (RAII)

Sound scary? Don’t worry, it’s nowhere near as bad as it sounds. RAII is a term/idiom coined by C++’s creator, Bjarne Stroustrup. I’ll go with the Wikipedia description:

In this language, the only code that can be guaranteed to be executed after an exception is thrown are the destructors of objects residing on the stack. Resource management therefore needs to be tied to the lifespan of suitable objects in order to gain automatic allocation and reclamation. Resources are acquired during initialization, when there is no chance of them being used before they are available, and released with the destruction of the same objects, which is guaranteed to take place even in case of errors.

Again, this sounds much scarier than it really is. The part that you need to really pay attention to is this one: needs to be tied to the lifespan of suitable objects in order to gain automatic allocation and reclamation.

Remember earlier how I said objects on the stack are automatically disposed of when they go out of scope, while new’ed objects on the heap aren’t, and if not manually deleted will result in a leak? Well, that’s where smart pointers come in. Essentially, a smart pointer is an object created on the stack that takes ownership of heap memory for you. When it goes out of scope, its destructor is called and the resources it manages are disposed of. Let’s take a look at our previous example, this time using a smart pointer instead.

```cpp
#include <memory>

void FillBuffer(std::unique_ptr<char[]> & buffer)
{
    for (int i = 0; i < 10000000; i++)
        buffer[i] = 'A';
}

int main(int argc, const char * argv[])
{
    std::unique_ptr<char[]> buf(new char[10000000]);
    FillBuffer(buf);
}   // buf goes out of scope here and calls delete [] for us
```

I will be the first to admit that the syntax of a smart pointer isn’t the most beautiful thing in the world, but it has many advantages. The first and most obvious… notice we no longer delete the pointer? Don’t worry, this doesn’t result in a memory leak; this is RAII in action. What’s happening here is that std::unique_ptr lives on the stack and fulfils a pretty simple function. On creation, it takes ownership of your memory (here, the block returned by new char[10000000]). When its destructor is called, it de-allocates that memory with delete []. Since destructors also run when a thrown exception unwinds the stack, there is no fancy error handling required here, and since the unique_ptr lives on the stack, it will be destroyed (and thus free the memory it holds) when it goes out of scope.
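To check the exception claim, here is a sketch using a hypothetical Tracked type that counts its live instances and a hypothetical SafeWork() function. The exception is caught by the caller, which matters: if an exception is never caught at all, whether the stack is unwound before the program terminates is up to the implementation.

```cpp
#include <memory>
#include <stdexcept>

// Hypothetical type that counts how many instances currently exist.
struct Tracked {
    static int alive;
    Tracked() { ++alive; }
    ~Tracked() { --alive; }
};
int Tracked::alive = 0;

// Ownership goes to a unique_ptr immediately, so its destructor runs
// delete[] on every exit path: normal return or exception.
void SafeWork(bool fail) {
    std::unique_ptr<Tracked[]> items(new Tracked[3]);
    if (fail)
        throw std::runtime_error("something went wrong");
}   // items is destroyed here, or while the exception unwinds the stack
```

Whether SafeWork() returns normally or throws, Tracked::alive ends up back at 0 once the caller has handled the exception.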

The second, less obvious thing here: notice that, as a parameter to FillBuffer, our pointer is passed by reference (&)? This is a by-product of the unique_ptr. Just like Highlanders, there can be only one. A unique_ptr cannot be copied (it can only be moved), so passing it by value would not compile here. Sometimes this is desired behaviour, but sometimes you want multiple pointers to the same object. In that case you can use std::shared_ptr. Shared pointers work a bit differently: they allow multiple pointers to point to the same thing and keep a reference count of the number of things pointing at your object. Each time one is destroyed, the reference count is reduced; when the reference count hits zero, the memory is freed. unique_ptr and shared_ptr perform different functions, but both work to make memory management more controlled, more predictable and less fragile. Once you get over the syntax, they actually result in a lot less work too, as your error handling is greatly simplified, you don’t have to watch memory usage so closely, etc…
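A short sketch of both behaviours, assuming a C++11 or later compiler. SharedCounts() and MoveTransfersOwnership() are hypothetical helper names; use_count(), std::make_shared and std::move are the standard library’s own.

```cpp
#include <memory>
#include <utility>
#include <vector>

// Records shared_ptr's reference count as copies come and go.
std::vector<long> SharedCounts() {
    std::vector<long> counts;
    std::shared_ptr<int> first = std::make_shared<int>(42);
    counts.push_back(first.use_count());      // 1 owner
    {
        std::shared_ptr<int> second = first;  // copying adds an owner
        counts.push_back(first.use_count());  // 2 owners
    }                                         // second is destroyed here
    counts.push_back(first.use_count());      // back to 1 owner
    return counts;                            // {1, 2, 1}
}

// unique_ptr cannot be copied, but ownership can be moved; the source is left empty.
bool MoveTransfersOwnership() {
    std::unique_ptr<int> original(new int(42));
    // std::unique_ptr<int> copy = original;  // would not compile: copying is deleted
    std::unique_ptr<int> moved = std::move(original);
    return original == nullptr && *moved == 42;
}
```

When `first` itself goes out of scope at the end of SharedCounts(), the count drops to zero and the int is freed, with no delete written anywhere.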

TL;DR version: smart pointers essentially wrap your heap-based memory allocation in a stack-based object. That object’s creation and destruction manage the lifecycle of your memory, simplifying error handling and making memory leaks a great deal less likely.
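To demystify what “wrapping heap memory in a stack-based object” means, here is a hypothetical, stripped-down CharBuffer class. It is a teaching sketch, not a replacement for std::unique_ptr or std::vector: the constructor acquires the memory, the destructor releases it, and copying is disabled so two objects can never delete the same block.

```cpp
#include <cstddef>

// Hypothetical minimal RAII wrapper around a heap-allocated char array.
class CharBuffer {
public:
    explicit CharBuffer(std::size_t size) : data_(new char[size]), size_(size) {}  // acquire
    ~CharBuffer() { delete[] data_; }                                              // release

    // A copy would leave two objects deleting the same memory, so forbid copying.
    CharBuffer(const CharBuffer&) = delete;
    CharBuffer& operator=(const CharBuffer&) = delete;

    char& operator[](std::size_t i) { return data_[i]; }  // no bounds checking
    std::size_t size() const { return size_; }

private:
    char* data_;
    std::size_t size_;
};
```

Used as a plain local, `CharBuffer buffer(16);` frees its memory when buffer goes out of scope, including when an exception passes through.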

A small primer of C++ style memory management

It’s possible at this point that you have very little prior experience with memory management at all, and to understand why something is better, it helps to understand the way that is worse. It’s also quite possible that the idea of a pointer is completely alien to you, so let’s have a quick primer on what a pointer is and how it works.

Let’s look at a very simple program:

```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    int * i = new int();
    *i = 42;
    std::cout << *i;
    delete i;
}
```

Here we are creating a pointer i that points to type int.

This is an important concept to get your head around. A pointer is NOT the same type as what it points to. I will explain this more in a moment, for now let’s carry on.

You declare a pointer type using the asterisk (*). As we saw earlier on, to allocate memory on the heap you use the new operator, therefore the line:

int * i = new int();

Creates a pointer to type int named i and allocates space for an int on the heap using new.

Somewhat confusingly, the * operator is also used to access the data a pointer points to. One important thing to keep in mind: a pointer is ultimately a location in memory. A 32-bit pointer can address 2^32 = 4,294,967,296 distinct bytes, and a pointer is simply the location of one of those bytes. If it helps, you can think of pointers as bookmarked locations in memory. To actually read what is at the location, you “dereference” it, which is where the * operator comes in. So the line:

*i = 42;

Is saying: at the memory location pointed to by i, write the value 42. Since we told the compiler what type of data our pointer points to, it knows how to do the rest. You also dereference a pointer to read its contents, as we do when printing it with cout. Once again, the compiler knows how to interpret that memory because it knows what type of data the pointer points to.
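Dereferencing works in both directions, which is what lets a function modify variables that belong to its caller. A minimal sketch; SwapViaPointers() is a hypothetical name, and in real code std::swap (from `<utility>`) already does this job.

```cpp
// Hypothetical helper: swaps two ints by writing through pointers to them.
// *a and *b refer to the caller's variables, not to copies.
void SwapViaPointers(int* a, int* b) {
    int temp = *a;  // read the int that a points to
    *a = *b;        // write b's int into a's location
    *b = temp;      // write the saved value into b's location
}
```

With `int x = 1, y = 2;`, calling `SwapViaPointers(&x, &y);` leaves x as 2 and y as 1.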

Let’s go back in time a moment, remember when I said a pointer is not the same type as what it points to… this is very important to realize. If you say

char * c = new char();

Just like before, you are creating a pointer to type char NOT a char. This little sample might make the difference a bit more clear.

```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    char c = 'A';
    int i = 42;
    char * ptrC = new char();
    *ptrC = 'A';
    int * ptrI = new int();
    *ptrI = 42;

    // For the two pointers, the address printed is where the pointed-to value lives,
    // while sizeof reports the size of the pointer itself.
    std::cout << "char c has value of " << c << " is located at " << static_cast<void*>(&c) << " and is " << sizeof(c) << " bytes" << std::endl;
    std::cout << "int i has value of " << i << " is located at " << static_cast<void*>(&i) << " and is " << sizeof(i) << " bytes" << std::endl;
    std::cout << "char ptrC has value of " << *ptrC << " is located at " << static_cast<void*>(ptrC) << " and is " << sizeof(ptrC) << " bytes" << std::endl;
    std::cout << "int ptrI has value of " << *ptrI << " is located at " << static_cast<void*>(ptrI) << " and is " << sizeof(ptrI) << " bytes" << std::endl;
}
```

(And yes, this example leaks memory).

Here are the results of running this application on 64-bit Mac OS X. The addresses will differ on every machine, and on a 32-bit OS the pointer sizes will be different too.

```
char c has value of A is located at 0x7fff5fbff8bf and is 1 bytes
int i has value of 42 is located at 0x7fff5fbff8b8 and is 4 bytes
char ptrC has value of A is located at 0x1001000e0 and is 8 bytes
int ptrI has value of 42 is located at 0x100103a60 and is 8 bytes
```

As you can see, both of our pointers are 8 bytes (64-bit OS, and 64 bits == 8 bytes), even though the data they point to is substantially smaller. In the case of the char pointer, the pointer is actually 8 times larger than the data it points to! As I mentioned earlier, each pointer is actually just a location in memory. That is what the 0x____________ represents: a 64-bit address in hexadecimal form (with leading zeros omitted).

One other thing you might have noticed is that even ordinary variables like c and i have a memory address; you can get the location of a variable using the address-of operator (&). This makes the following possible:

```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    int i = 42;
    int *pointerToI = &i;
    std::cout << "The answer to the meaning of life is " << *pointerToI << std::endl;
    if (pointerToI == &i)
    {
        std::cout << "Memory locations are the same" << std::endl;
    }
}
```

Which will print:

```
The answer to the meaning of life is 42
Memory locations are the same
```

You need to be careful with ownership here; there are a number of potential pitfalls. If you point to a stack variable that goes out of scope, you are in for a world of hurt. Consider:

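```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    int * meaningOfLife = nullptr;
    {
        int i = 42;
        meaningOfLife = &i;
    }   // i ends here, so meaningOfLife now dangles
    std::cout << *meaningOfLife << std::endl;  // undefined behaviour: i no longer exists
}
```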

The problem here is that meaningOfLife is now pointing at a memory address that is no longer valid: i ceased to be when it went out of scope. Now the most insidious part… if you run the above code, it may well work and appear to display the proper result. Just because a location in memory is no longer valid doesn’t mean it doesn’t still hold the old data; memory isn’t blanked when it is released. Formally, reading it is undefined behaviour, and in practice your pointer will appear to work properly… until something reuses that address, then KABOOM!
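One way out is simply not to hand out the address of something that is about to die. A sketch of two safer alternatives; the function names are hypothetical, and the broken version is kept only as a comment so the block compiles cleanly.

```cpp
#include <memory>

// Broken, shown only as a comment: i dies when the function returns,
// so the caller would receive a dangling pointer.
// int* BrokenMeaningOfLife() { int i = 42; return &i; }

// Option 1: return the value itself; the caller gets its own copy.
int MeaningOfLifeByValue() {
    int i = 42;
    return i;
}

// Option 2: if the value really must live on the heap, return an owning smart
// pointer, which makes it explicit who is responsible for freeing it.
std::unique_ptr<int> MeaningOfLifeOnHeap() {
    return std::unique_ptr<int>(new int(42));
}
```

Option 1 is the right default for small values; Option 2 is only worth it when the object is large or must outlive the scope that created it.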

Pointers to Pointers

Let’s take a look at one more common hang up with C++ memory management, pointers to pointers. We are getting awfully close to an inception moment here. Consider:

```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    int ** pointerToPointerToInt;
    int * pointerToInt = new int(42);
    pointerToPointerToInt = &pointerToInt;
    std::cout << **pointerToPointerToInt << std::endl;  // 42

    int * pointerToDifferentInt = new int(43);
    *pointerToPointerToInt = pointerToDifferentInt;      // changes pointerToInt itself
    std::cout << **pointerToPointerToInt << std::endl;  // 43
    // Note: the int holding 42 is now unreachable, so this example leaks it.
}
```

As you can see, you can actually have pointers to pointers, this is done using multiple * operators. In fact, you can have pointers to pointers to pointers to pointers if you really wanted to, and that would use ****.

Otherwise, a pointer to a pointer works almost identically to a normal pointer. The only difference is that when you dereference a pointer to a pointer using the * operator, you are left with an ordinary pointer; you have to dereference it twice to get what it is pointing at. In the above example, when we wanted to change the pointer that pointerToPointerToInt points at (pointerToInt itself), we dereferenced it a single time, but when we wanted to access the actual value, we dereferenced it twice. Pointers to pointers aren’t actually all that uncommon: linked-list code, for example, often uses a pointer to a node’s next pointer when inserting or removing nodes… fortunately the logic is consistent.
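Here is a sketch of that linked-list use. The Node struct and the Append(), RemoveFirst(), ToVector() and FreeList() functions are hypothetical names for this illustration, and in real code std::forward_list or std::list would do this for you. The trick is that link is a pointer to whichever pointer needs changing (either head itself or some node’s next field), so an empty list or the first node needs no special case.

```cpp
#include <vector>

// Hypothetical singly linked list node.
struct Node {
    int value;
    Node* next;
};

void Append(Node** head, int value) {
    Node** link = head;                // points at the pointer we may overwrite
    while (*link != nullptr)
        link = &(*link)->next;         // step to the next node's "next" field
    *link = new Node{value, nullptr};  // updates head or some node's next
}

void RemoveFirst(Node** head, int value) {
    for (Node** link = head; *link != nullptr; link = &(*link)->next) {
        if ((*link)->value == value) {
            Node* doomed = *link;
            *link = doomed->next;      // unlink, even if doomed is the head
            delete doomed;
            return;
        }
    }
}

std::vector<int> ToVector(const Node* head) {
    std::vector<int> out;
    for (const Node* n = head; n != nullptr; n = n->next)
        out.push_back(n->value);
    return out;
}

void FreeList(Node** head) {
    while (*head != nullptr) {
        Node* next = (*head)->next;
        delete *head;
        *head = next;                  // leaves *head as nullptr at the end
    }
}
```

Appending 1, 2 and 3 to an empty list (`Node* head = nullptr; Append(&head, 1);` and so on) and then calling `RemoveFirst(&head, 1)` removes the head node without any special-case code.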

The Array… think of it as another pointer…

One last thing to touch on… the array. You may recall that when we created our char buffer earlier, we actually did it using array new (new char[10000000]). At the end of the day, an array is mostly just trickery over a contiguous block of memory. When you say:

char alphabet[26];

You are actually saying “give me a block of memory 26 chars long”. So pointers and arrays can do some rather neat things…

```cpp
#include <iostream>

int main(int argc, const char * argv[])
{
    const char alphabet[] = { 'A','B','C','D','E','F','G','H','I','J','K','L','M','N','O','P',
                              'Q','R','S','T','U','V','W','X','Y','Z' };
    const char * letter = &alphabet[0];
    std::cout << *letter << std::endl;

    for (int i = 0; i < 26; i++)
        std::cout << *(letter++);

    letter = &alphabet[0];
    std::cout << std::endl << letter[5] << std::endl;
}
```

Run this code and we will see:

```
A
ABCDEFGHIJKLMNOPQRSTUVWXYZ
F
```

For the record, almost everything you see above IS A VERY BAD IDEA! This is the kind of code we are trying to avoid, as it is horrifically fragile, but let’s take a look at what we are doing here…

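First we create a const char array holding the characters of our alphabet. You might expect to get a pointer to its letters by taking the address of the array itself:

&alphabet;

That is legal, but it gives you a pointer to the whole array (its type is const char (*)[26]), not a pointer to a char. An array is just a chunk of memory, so to get a pointer you can step through, you take the location of the first item in the array, which is exactly what:

const char * letter = &alphabet[0];

does. This creates a pointer to type char and points it at the first character in our alphabet array. (Writing const char * letter = alphabet; does the same thing, because an array name converts to a pointer to its first element.) As you can see, when we print it out we get A as expected.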
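A small sketch that lets the compiler confirm the types involved, using a hypothetical three-letter array abc rather than the full alphabet. The static_assert lines check the types at compile time; SameStartingAddress() (also a hypothetical name) checks that both pointers hold the same address even though their types differ.

```cpp
#include <type_traits>

// Hypothetical three-letter array, just to have something to take the address of.
const char abc[] = {'A', 'B', 'C'};

// &abc[0] is a pointer to the first char; &abc is a pointer to the whole array.
static_assert(std::is_same<decltype(&abc[0]), const char*>::value,
              "&abc[0] points to one char");
static_assert(std::is_same<decltype(&abc), const char (*)[3]>::value,
              "&abc points to an array of 3 chars");

// Both start at the same address; only what you can do with them differs.
bool SameStartingAddress() {
    return static_cast<const void*>(&abc) == static_cast<const void*>(&abc[0]);
}
```

The practical difference shows up in arithmetic: &abc[0] + 1 moves to the next char, while &abc + 1 would skip past the entire array.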

Next we loop through the letters of alphabet, printing our dereferenced pointer value. You may notice that each iteration we ++ our pointer… welcome to pointer arithmetic. Remember when I said a pointer is simply a location in memory, and an array is a contiguous block of memory? Well, you can step between the items in an array using arithmetic on a pointer. The line:

letter++

is functionally the same as

letter = letter + 1;

Pointer arithmetic counts in elements, not bytes: the compiler scales the 1 by sizeof(char) for you. For a char that happens to be 1 byte, but the same ++ on an int * would move forward sizeof(int) bytes (4 on typical platforms).

You may notice that later on we access our pointer as if it were an array, when we call:

std::cout << std::endl << letter[5] << std::endl;

The array subscript operator ([ ]) is defined in terms of pointer arithmetic, so this is equivalent to:

std::cout << std::endl << *(letter + 5) << std::endl;

Be very careful with pointer arithmetic and the subscript operator, though, as absolutely no bounds checking is done, so there is nothing to prevent you from trying to access a location that doesn’t exist.
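A sketch that checks how pointer arithmetic and the subscript operator actually behave: p + 1 moves forward one element (sizeof(T) bytes), and p[i] means *(p + i). BytesBetweenNeighbours() and AddressesMatch() are hypothetical helper names.

```cpp
#include <cstddef>

// Measures how many bytes apart p and p + 1 really are, for any element type T.
template <typename T>
std::ptrdiff_t BytesBetweenNeighbours(const T* p) {
    const char* first = reinterpret_cast<const char*>(p);
    const char* second = reinterpret_cast<const char*>(p + 1);  // one element on
    return second - first;                                      // equals sizeof(T)
}

// p[i] is defined as *(p + i), so both expressions name the same location.
template <typename T>
bool AddressesMatch(const T* p, std::size_t i) {
    return &p[i] == p + i;
}
```

For `int nums[] = {10, 20, 30};`, BytesBetweenNeighbours(nums) equals sizeof(int), while for a char array it is 1, and `*(nums + 2)` reads the same 30 as `nums[2]`. Neither helper checks bounds: passing an index past the end is just as unsafe as it is with raw subscripts.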

Hey, wait a minute here!!!

You may have noticed we use the * operator to both declare and dereference pointers. We also use the & operator to both declare a reference as well as to get the address of a variable… how does that work?

Well, it’s all about context. When declaring a variable or defining parameters, * indicates a pointer while & indicates a reference. In expressions, a unary * dereferences and a unary & takes an address, and between two operands they mean something else entirely: multiplication and bitwise AND. I personally think this was a bit of a mistake for clarity reasons.
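A tiny sketch with every one of those meanings of * and & in one place; ContextDemo() is a hypothetical name.

```cpp
// Hypothetical demo: each use of * and & is labelled with its meaning in context.
int ContextDemo() {
    int x = 6;
    int* p = &x;           // declaration: p is a pointer; expression: &x takes x's address
    int& r = x;            // declaration: r is a reference, another name for x
    int product = *p * 7;  // unary * dereferences p (6), binary * multiplies: 42
    int masked = r & 3;    // binary & is bitwise AND: 6 & 3 == 2
    return product + masked;  // 44
}
```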

Conclusion

Ok… so maybe it isn’t EASY per se, but memory allocation in C++ isn’t the black magic it is made out to be, especially because, 99% of the time, you should be able to completely ignore the latter half of this document. More to the point, if you stick to standard types like vector, map and string, they take care of all of this stuff for you!

If you find yourself using new or delete, or working with a non-const pointer, it should be an alarm bell of sorts. Stop, take a closer look at your code and ask yourself “could I accomplish this task using a standard type?” or “would a reference work instead?”. If the answer to either question is yes, you are almost certainly better off refactoring your code.
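As an illustration of that kind of refactoring, here is the earlier FillBuffer() rewritten against a standard type and taking a reference. std::vector<char>& is one reasonable choice under these assumptions, not the only one (a std::string would also work).

```cpp
#include <vector>

// FillBuffer from earlier, rewritten: no new, no delete, no raw pointer,
// and the buffer carries its own size, so there is no hard-coded 10000000.
void FillBuffer(std::vector<char>& buffer) {
    for (char& c : buffer)  // c is a reference, so assigning to it changes the vector
        c = 'A';
}
```

The caller simply writes `std::vector<char> buf(10000000); FillBuffer(buf);`, and the memory is released when buf goes out of scope.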